auditissimo

AI-Supported Internal Audit of IRBA Rating Models

Domain

Generative AI / Audit

Partners

Reutlingen University, msg for banking ag

Status

March 2026

Ongoing project — development and evaluation are not yet complete. The architectural decisions, results and conclusions described here reflect the state as of March 2026 and will be continuously updated as research progresses.

How well can Generative AI support internal audit in reviewing complex rating models? In the research project auditissimo, we worked with Reutlingen University and msg for banking ag to explore exactly this question — and developed a modular AI prototype that accompanies the entire audit process of IRBA rating systems step by step.

Background: A Demanding Audit Task

Credit institutions using the Internal Ratings-Based Approach (IRBA) estimate their regulatory capital parameters — probability of default (PD), loss given default (LGD) and exposure at default (EAD) — on the basis of their own statistical models. Art. 191 of the Capital Requirements Regulation (CRR) obliges internal audit to fully review these rating models at least once a year.

That sounds manageable — but in practice it is anything but. A typical validation report for a medium-sized institution references over forty individual regulatory requirements from CRR, EBA guidelines, EBA-RTS and the ECB Guide to Internal Models. Each requirement must be substantiated with specific quantitative evidence: Gini coefficient ≥ 0.40, PSI threshold values, Hosmer-Lemeshow tests, migration matrices — the list is long. Such expertise is rarely fully present in a single audit team.

This is precisely where auditissimo comes in.

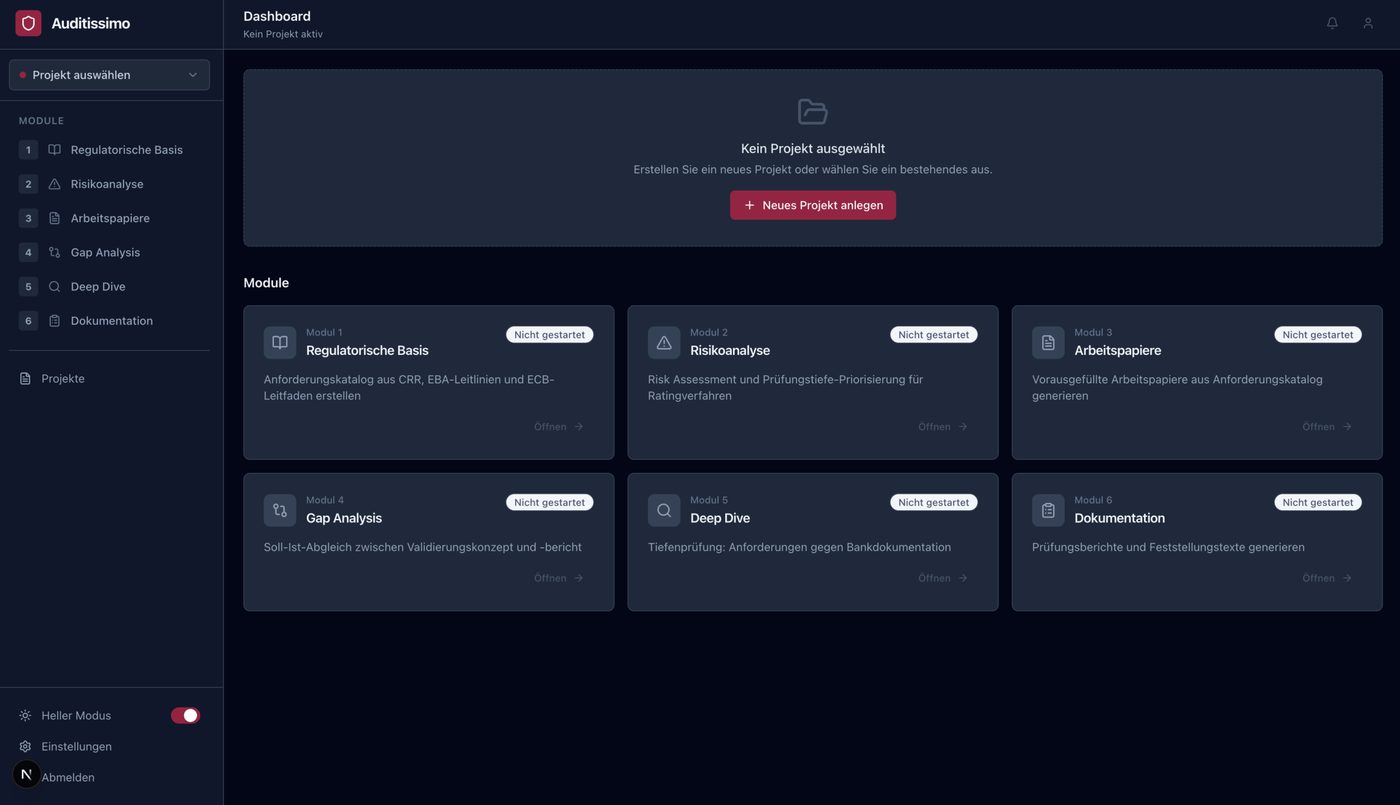

The auditissimo Architecture: Six Modules, One Continuous Audit Process

auditissimo is not a generic chat tool. The system maps the actual logic of an IRBA audit — step by step, module by module.

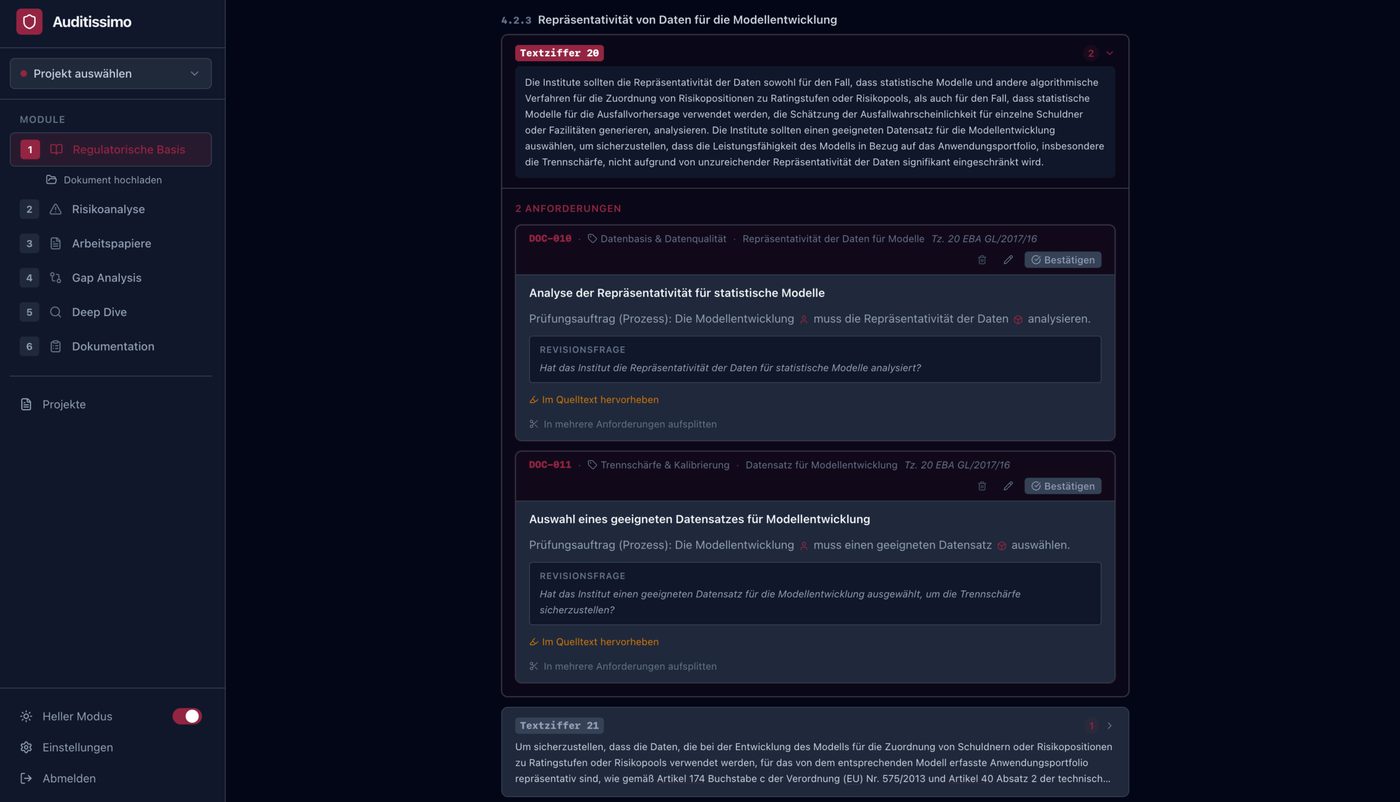

M1 — Regulatory Basis

A Single Source of Truth

Regulatory requirements for IRBA models are scattered across many documents — CRR, EBA-RTS, EBA Guidelines, ECB guides, internal policies. Module 1 reads all relevant source documents and decomposes them into atomic, testable individual requirements. Each requirement receives a unique ID, a brief description, the precise wording and its exact regulatory origin. In the current implementation, the system generates an average of 31 requirements per regulatory section — with a precision of over 90% confirmed by human reviewers.
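To make the four attributes concrete, a requirement register could be sketched as follows. This is a minimal illustration, not the auditissimo data model: the class name, field names, ID format and the placeholder entries are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirement:
    """One atomic, testable requirement (field names are hypothetical)."""
    req_id: str   # unique ID
    summary: str  # brief description
    wording: str  # precise wording, copied verbatim from the source document
    origin: str   # exact regulatory origin


def build_register(prefix, extracted):
    """Assign sequential IDs to (summary, wording, origin) triples."""
    return [
        Requirement(f"{prefix}-{n:03d}", summary, wording, origin)
        for n, (summary, wording, origin) in enumerate(extracted, start=1)
    ]


# Placeholder content only: real entries would carry the verbatim source text.
register = build_register("REQ", [
    ("Annual review of rating systems", "<verbatim text>", "CRR Art. 191"),
    ("Evidence of discriminatory power", "<verbatim text>", "<document and section>"),
])
for req in register:
    print(req.req_id, "|", req.summary, "|", req.origin)
```

Keeping the record immutable (`frozen=True`) is one way to ensure that later modules reference a requirement by ID without altering its wording.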

M2 — Risk Assessment

Not all requirements carry equal weight. Module 2 evaluates each requirement using model metadata (asset class, vintage, regulatory history) and assigns it a risk score. Audit resources are thereby directed precisely at the most critical areas — fully in line with the ECB risk assessment framework.
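The idea of risk-based prioritisation can be sketched in a few lines. The scoring formula, the weights and the metadata fields below are purely hypothetical assumptions chosen for illustration; the text does not specify how Module 2 computes its score.

```python
from dataclasses import dataclass


@dataclass
class ModelMetadata:
    asset_class: str      # e.g. "retail" or "corporate"
    model_age_years: int  # vintage: years since the last redevelopment
    open_findings: int    # regulatory history: unresolved findings


# Hypothetical weights, chosen only for illustration.
ASSET_CLASS_RISK = {"corporate": 0.6, "retail": 0.4}


def model_risk(meta):
    """Average three factors, each scaled to the range 0..1."""
    asset = ASSET_CLASS_RISK.get(meta.asset_class, 0.5)
    age = min(meta.model_age_years / 10, 1.0)
    history = min(meta.open_findings / 5, 1.0)
    return (asset + age + history) / 3


def rank_requirements(meta, criticality):
    """Return (req_id, score) pairs sorted from highest to lowest risk.

    criticality maps a requirement ID to a weight between 0 and 1.
    """
    risk = model_risk(meta)
    scored = [(rid, round(100 * risk * w, 1)) for rid, w in criticality.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


meta = ModelMetadata("retail", model_age_years=6, open_findings=2)
ranking = rank_requirements(meta, {"REQ-001": 0.5, "REQ-002": 1.0, "REQ-003": 0.8})
for rid, score in ranking:
    print(rid, score)
```

The output is an ordered work list: requirements at the top would receive audit attention first.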

M3 — Working Paper Initialisation

Module 3 generates pre-populated audit working papers for each selected requirement. The administrative burden of audit preparation is noticeably reduced — the audit team can focus on substantive assessment rather than document creation.

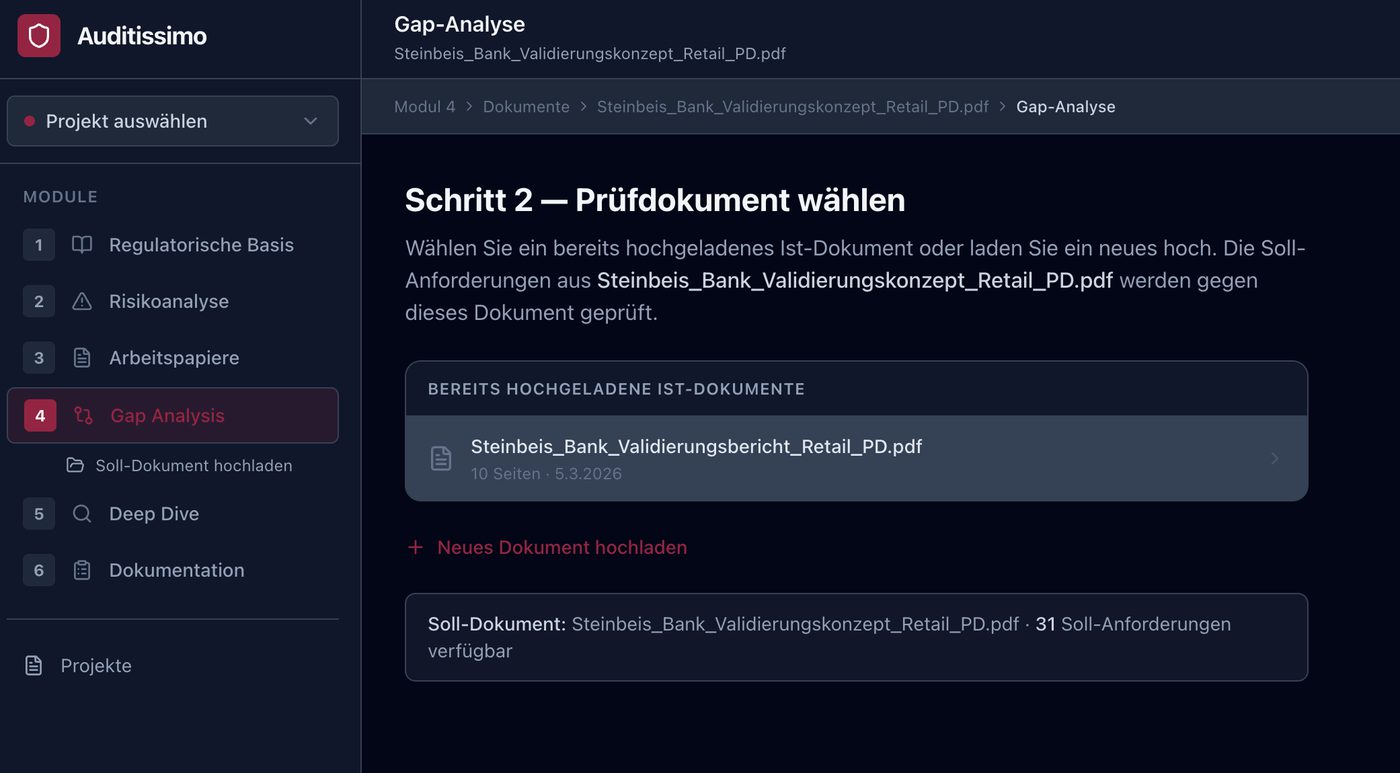

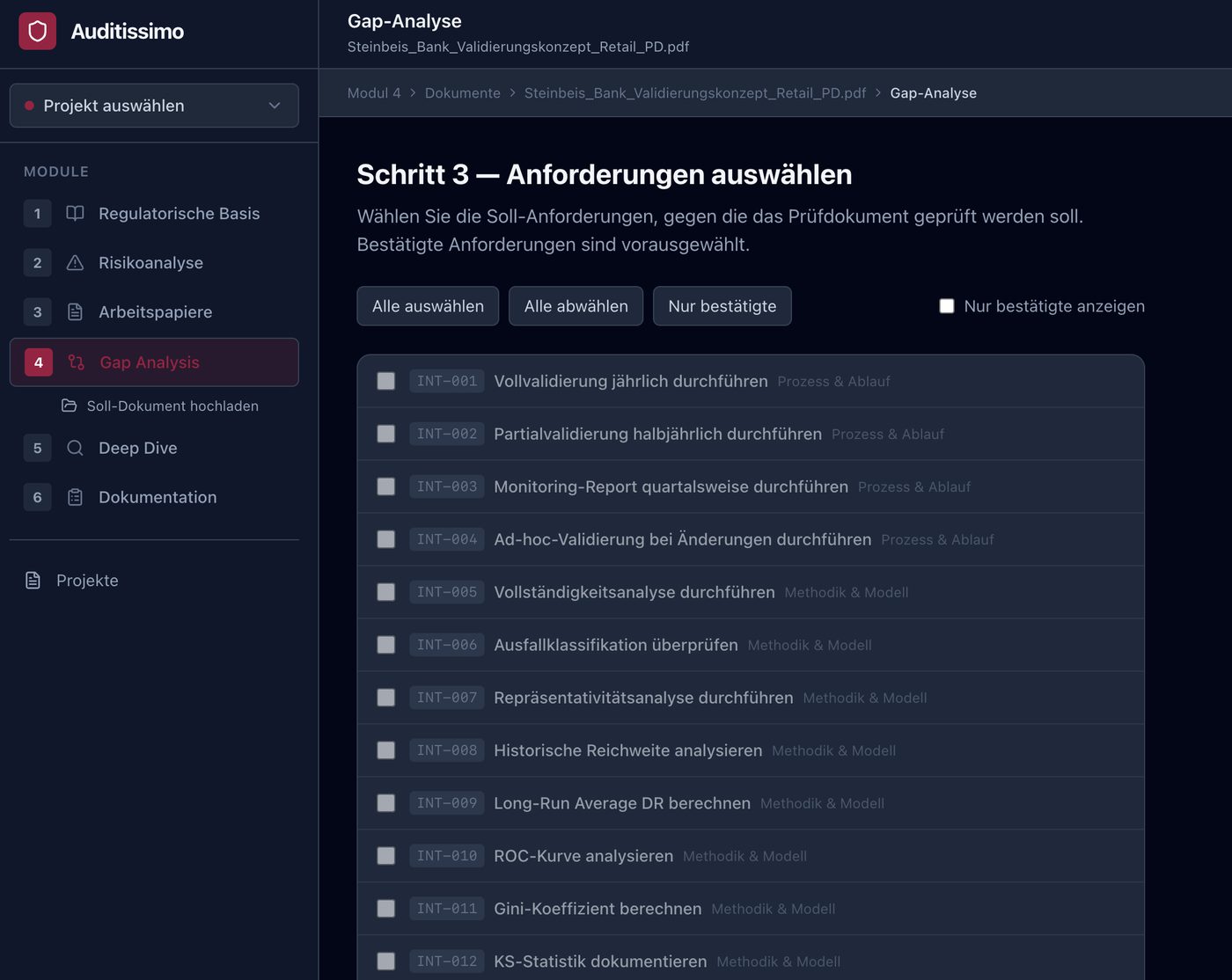

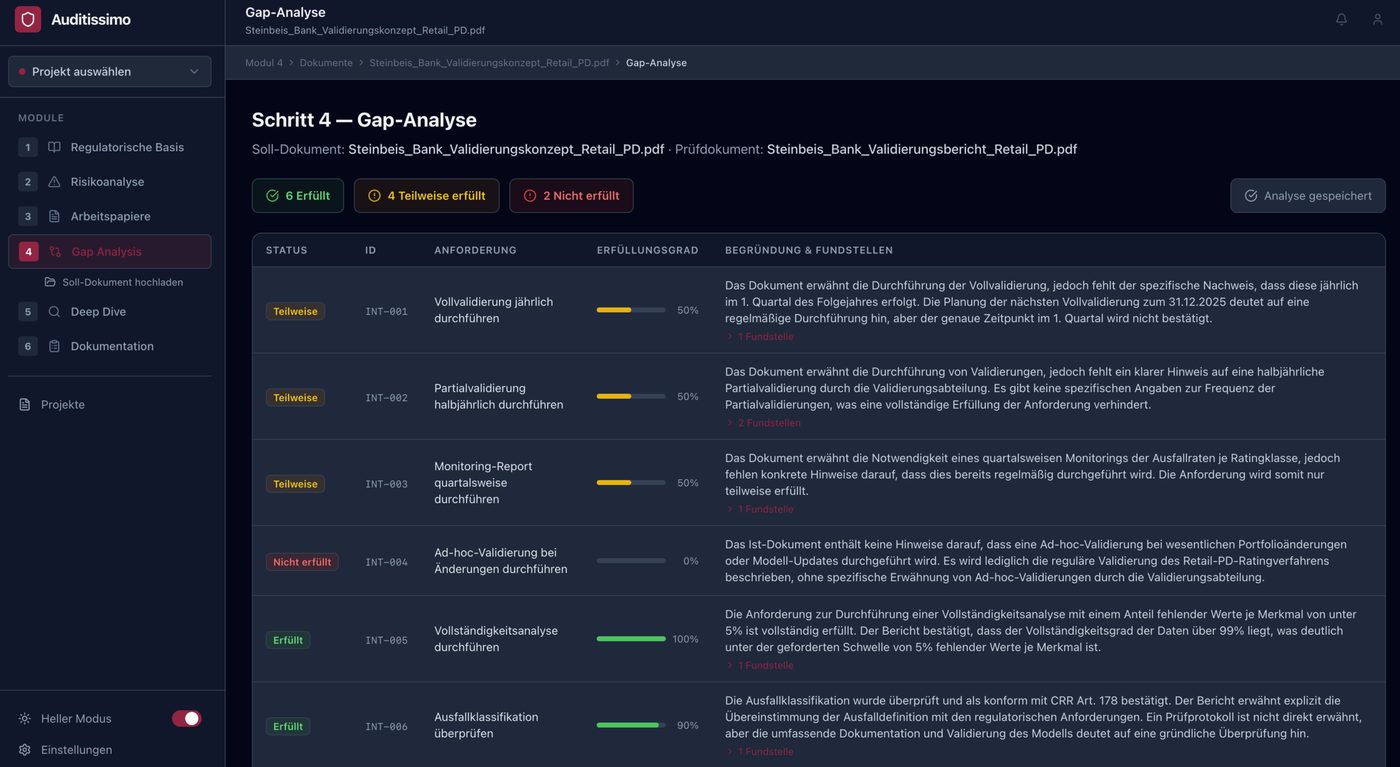

M4 — Gap Analysis

The Heart of the Audit

Module 4 compares the validation concept (what must be reviewed?) with the validation report (what was actually reviewed?). For each requirement, a three-level compliance judgement is generated:

- Compliant (80–100): Clear, complete documentary evidence available

- Partially compliant (30–79): Partial evidence, but gaps or ambiguities

- Non-compliant (0–29): No or insufficient evidence

Crucially, each AI assessment is linked to supporting passages from the audit document, so the reviewer can trace the AI's reasoning chain and override it if necessary. The temperature of the LLM calls is deliberately set to T = 0.1 — gap analysis is an evidence retrieval task, not a creative one.
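The score bands and the reviewer override described above can be expressed as a small data structure. This is a sketch under assumptions: the class and field names are hypothetical, and the way the temperature is passed to the model is not shown because the text does not describe it.

```python
from dataclasses import dataclass, field
from typing import Optional

LLM_TEMPERATURE = 0.1  # value stated in the text


@dataclass
class GapAssessment:
    req_id: str
    score: int                                    # 0..100, produced by the AI
    evidence: list = field(default_factory=list)  # supporting passages
    override_level: Optional[str] = None          # set by the reviewer, if any

    def __post_init__(self):
        if not 0 <= self.score <= 100:
            raise ValueError("score must lie between 0 and 100")

    @property
    def ai_level(self):
        """Map the score to the three bands listed above."""
        if self.score >= 80:
            return "compliant"
        if self.score >= 30:
            return "partially compliant"
        return "non-compliant"

    @property
    def level(self):
        """The reviewer's override, if present, takes precedence."""
        return self.override_level or self.ai_level


a = GapAssessment("REQ-001", 72, evidence=["<passage quoted from the report>"])
print(a.req_id, a.level, len(a.evidence))
a.override_level = "non-compliant"  # the reviewer disagrees with the AI
print(a.req_id, a.level)
```

Storing the AI judgement and the override separately keeps both visible in the audit trail.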

M5 — Deep Dive

Requirements rated as "partially compliant" or "non-compliant" in M4 are investigated in greater depth in Module 5. The module drills into specific model outputs, datasets and calculations — for example: Is the reported Gini coefficient of 0.46 for the retail PD model consistent with the methodology specified in the validation concept?
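A consistency check of this kind could be sketched as follows, assuming the common definition Gini = 2 · AUC − 1 (under which the thresholds Gini ≥ 0.40 and AUC ≥ 0.70 quoted in this article coincide). The function names, the tolerance and the tiny data set are hypothetical; the validation concept of a real institution may prescribe a different methodology.

```python
def auc(scores, defaults):
    """Share of (defaulter, non-defaulter) pairs in which the defaulter
    has the higher score; ties count as half a pair."""
    pos = [s for s, d in zip(scores, defaults) if d == 1]
    neg = [s for s, d in zip(scores, defaults) if d == 0]
    if not pos or not neg:
        raise ValueError("need at least one defaulter and one non-defaulter")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos for n in neg
    )
    return wins / (len(pos) * len(neg))


def gini(scores, defaults):
    """Gini coefficient under the assumed definition 2 * AUC - 1."""
    return 2 * auc(scores, defaults) - 1


def is_consistent(reported, recomputed, tolerance=0.01):
    """Flag whether a reported value matches the recomputed one."""
    return abs(reported - recomputed) <= tolerance


# Hypothetical toy data: estimated PDs and observed default flags.
pd_estimates = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2]
default_flags = [1, 1, 0, 1, 0, 0, 0, 0]

recomputed = gini(pd_estimates, default_flags)
print(f"recomputed Gini: {recomputed:.4f}")
print("consistent with reported 0.46:", is_consistent(0.46, recomputed))
```

On this toy data the check fails, which is the situation in which a reviewer would look more closely at the sample and the calculation method.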

M6 — Report and Finding Synthesis

Module 6 aggregates the findings from M4 and M5 into structured audit findings in the format of the institution's own audit management system. All outputs are explicitly declared as first drafts — the final assessment always lies with the responsible auditor.
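The aggregation step might look like the following sketch. The record layout, the status text and the ordering rule are assumptions for illustration only; the actual output follows the format of the institution's audit management system, which is not described here.

```python
from dataclasses import dataclass


@dataclass
class DraftFinding:
    req_id: str
    level: str
    summary: str
    status: str = "DRAFT, pending auditor review"  # every output is a first draft


def synthesise(assessments):
    """Turn non-compliant and partially compliant results into draft findings,
    listing non-compliant items first."""
    order = {"non-compliant": 0, "partially compliant": 1}
    relevant = [a for a in assessments if a["level"] in order]
    relevant.sort(key=lambda a: order[a["level"]])
    return [DraftFinding(a["req_id"], a["level"], a["note"]) for a in relevant]


# Hypothetical inputs from the gap analysis and deep dive.
results = [
    {"req_id": "REQ-001", "level": "compliant", "note": "evidence complete"},
    {"req_id": "REQ-002", "level": "partially compliant", "note": "test described, no result"},
    {"req_id": "REQ-003", "level": "non-compliant", "note": "no evidence found"},
]
findings = synthesise(results)
for f in findings:
    print(f.req_id, "|", f.level, "|", f.status)
```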

Human-in-the-Loop: Where AI Must Have Limits

auditissimo is consistently designed to support auditor judgement — not replace it. We identify four task categories in which human decision authority is non-negotiable:

- H1 — Materiality Assessment: Whether an identified gap is material as an audit finding requires professional judgement.

- H2 — Regulatory Interpretation: For ambiguous regulatory texts, the AI may deliver a plausible but incorrect interpretation. The auditor's institutional contextual knowledge is indispensable.

- H3 — Model Methodological Assessment: The appropriateness of specific modelling decisions lies beyond the current capabilities of general-purpose LLMs.

- H4 — Audit Opinion: The overall opinion on the regulatory suitability of the rating model is a legal and professional decision that remains with the qualified auditor.

Empirical Validation: The Steinbeis Bank as Test Environment

A central methodological challenge in evaluating GenAI audit tools: there are no publicly available, annotated datasets of IRBA compliance assessments — such data is institutionally confidential. auditissimo solves this problem through a specially developed synthetic test environment: the Steinbeis Bank.

The Steinbeis Bank is a fully parameterisable, synthetic credit institution with four production-grade IRBA models (Corporate PD/LGD, Retail PD/LGD), trained on 10 years of synthetic data (2015–2024) with realistic macroeconomic cycles.

Initial Results: What the AI Can — and Cannot — Do

In the pilot, 24 requirements from the retail PD validation context and four ablation scenarios were tested.

The pattern is clear: the AI detects complete omissions almost flawlessly (F1 = 0.92). Performance drops systematically the more subtle the non-compliance becomes. The hardest cases to detect are those in which the report narratively describes a methodology without providing quantitative results. These are exactly the cases to which experienced auditors would also devote the most attention.
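For readers less familiar with the metric: F1 is the harmonic mean of precision and recall. The sketch below shows the calculation on invented counts; the numbers are hypothetical and are not the pilot data.

```python
def f1_score(true_pos, false_pos, false_neg):
    """Harmonic mean of precision and recall, 0.0 if undefined."""
    precision = true_pos / (true_pos + false_pos) if true_pos + false_pos else 0.0
    recall = true_pos / (true_pos + false_neg) if true_pos + false_neg else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical example: 8 gaps correctly flagged, 2 false alarms, 2 gaps missed.
example = f1_score(true_pos=8, false_pos=2, false_neg=2)
print(f"F1 = {example:.2f}")
```

Because both false alarms and missed gaps lower the score, F1 penalises a tool that flags everything just as it penalises one that flags nothing.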

Particularly revealing: a generic prompt achieves only an F1 score of 0.59. Through stepwise refinement — role specification as a bank auditor, structured output schema and embedding concrete regulatory threshold values (AUC ≥ 0.70, PSI < 0.10) — the score rises to 0.78. Domain knowledge must be built into the system architecture, not merely hinted at in the prompt.
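The three refinement steps can be pictured as a prompt template. The following is only a sketch: the prompt wording, the schema fields and the function name are assumptions, and the actual auditissimo prompts are not reproduced here. Only the two threshold values are taken from the text.

```python
import json

# Threshold values quoted in the text; the layout around them is hypothetical.
THRESHOLDS = {"AUC": ">= 0.70", "PSI": "< 0.10"}

OUTPUT_SCHEMA = {
    "requirement_id": "string",
    "score": "integer from 0 to 100",
    "evidence": "list of verbatim passages from the report",
}


def build_prompt(requirement, report_excerpt):
    """Combine role, thresholds and output schema into one prompt string."""
    threshold_lines = "\n".join(
        f"- {name} {rule}" for name, rule in THRESHOLDS.items()
    )
    return (
        "You are a bank auditor reviewing an IRBA validation report.\n\n"
        f"Requirement:\n{requirement}\n\n"
        f"Threshold values:\n{threshold_lines}\n\n"
        f"Report excerpt:\n{report_excerpt}\n\n"
        "Answer only with JSON matching this schema:\n"
        f"{json.dumps(OUTPUT_SCHEMA, indent=2)}"
    )


prompt = build_prompt("<requirement wording>", "<excerpt from the validation report>")
print(prompt)
```

Keeping thresholds and schema in data structures rather than in free text means they can be maintained and versioned alongside the requirement register.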

What auditissimo Demonstrates

- Process Proximity Matters: Generic document-chat tools do not deliver actionable results for IRBA audit work. The AI must itself map the sequential logic of the audit process.

- Atomic Auditability Is Mandatory: Every AI statement must be traceable to a specific supporting passage — for transparency, for auditor override and for the regulatory audit trail.

- Governed Human Primacy: Audit conclusions must reflect the professional judgement of a qualified auditor. AI support is valuable; AI substitution is impermissible.

In concrete terms, a medium-sized institution with ten IRBA models faces 300 to 400 individual requirement reviews per annual cycle. auditissimo's streaming gap analysis can complete this analysis in hours rather than weeks, leaving the auditor free to focus on the material findings rather than mechanical document matching.

Working Paper

The methodological foundations, system architecture and evaluation results of auditissimo are documented in a working paper. The paper is continuously updated with new findings from ongoing research.

Generative AI in Internal Audit

A Process-Integrated Framework for AI-Assisted Review of IRBA Rating Procedures under Art. 191 CRR

Schieborn, Reichenberger, Körwers · Working Paper, Version March 2026 · continuously updated

See It Now

auditissimo is available online at auditissimo.com. If you are interested in a personal demonstration or a conversation about deployment in your institution, we would be happy to hear from you.

Contact and Live Demo

Would you like to see auditissimo in action or learn more about the application possibilities for your institution? Please do get in touch.

Prof. Dr. Dirk Schieborn

Steinbeis Transfer Centre for Data Analytics and Predictive Modelling

dirk.schieborn@steinbeis-analytics.de

The working paper "Generative Artificial Intelligence in Internal Audit: A Process-Integrated Framework for AI-Assisted Review of IRBA Rating Procedures under Art. 191 CRR" was produced in collaboration by Prof. Dr. Dirk Schieborn (Reutlingen University), Prof. Dr. Volker Reichenberger (Reutlingen University) and Tim S. Körwers (msg for banking ag). Draft version March 2026.